Intellectual Honesty in the Age of Vibes

I didn't know intellectual honesty was a skill until it started appearing in my performance reviews.

The first time I encountered it as a company value, it was called “intellectual honesty.” The expectation was that you do the deep dive, that you seek the truth, that you don’t stop at the first plausible explanation. I was told I was good at it. At my last company, CloudKitchens, it came up again in my performance reviews, but under a different name: “truth seeking.” Same idea, different label. I remember reading it and thinking, sure, obviously. Isn’t that just engineering? You find the bug, you find the root cause, you fix the thing. But over time, I realized something: not everyone treats this as the default.

For many engineers, it’s one approach among many. For some engineers, it is the only approach. They need to know how things work to actually fix them. Not roughly. Not “the docs say this.” Actually know. Read the source, tcpdump the traffic, go as low as possible.

Most companies have some version of this value; they just name it differently. Atlassian calls it “Open company, no bullshit.” Netflix calls it “Candor.” Amazon calls it “Dive Deep,” being skeptical when metrics and anecdotes don’t match. Bridgewater calls it “radical truth and radical transparency.” The language varies. The underlying idea is the same:

“Have the courage to see things as they actually are, not as you wish they were.”

Every one of these companies felt the need to put this in writing because the natural human tendency runs the other direction.

I never thought of it as a philosophy. It was just how I operated. That was the joy of work actually. But then I started sitting in incident reviews where sometimes blame was shifted sideways, where the postmortem became a performance instead of a diagnosis. Not because people were malicious, but because investigating further would mean challenging assumptions they were comfortable with. The database was fine. The config was correct. The deploy went smoothly. It always went smoothly. Nobody wanted to be the person who pulled the thread, because pulling the thread meant admitting the thing they trusted was the thing that broke.

And it wasn’t just postmortems. I sat in design reviews, architecture discussions, planning meetings where everyone nodded along and moved on. Not because they agreed, but because asking a real question carried risk. Ask “why are we doing it this way?” and you might come across as rude, as not a team player, as the person who slows things down. And worse: your question might reveal that you also don’t fully understand the thing you’re supposed to own. So you nod. Everyone nods. The meeting ends. The assumption survives.

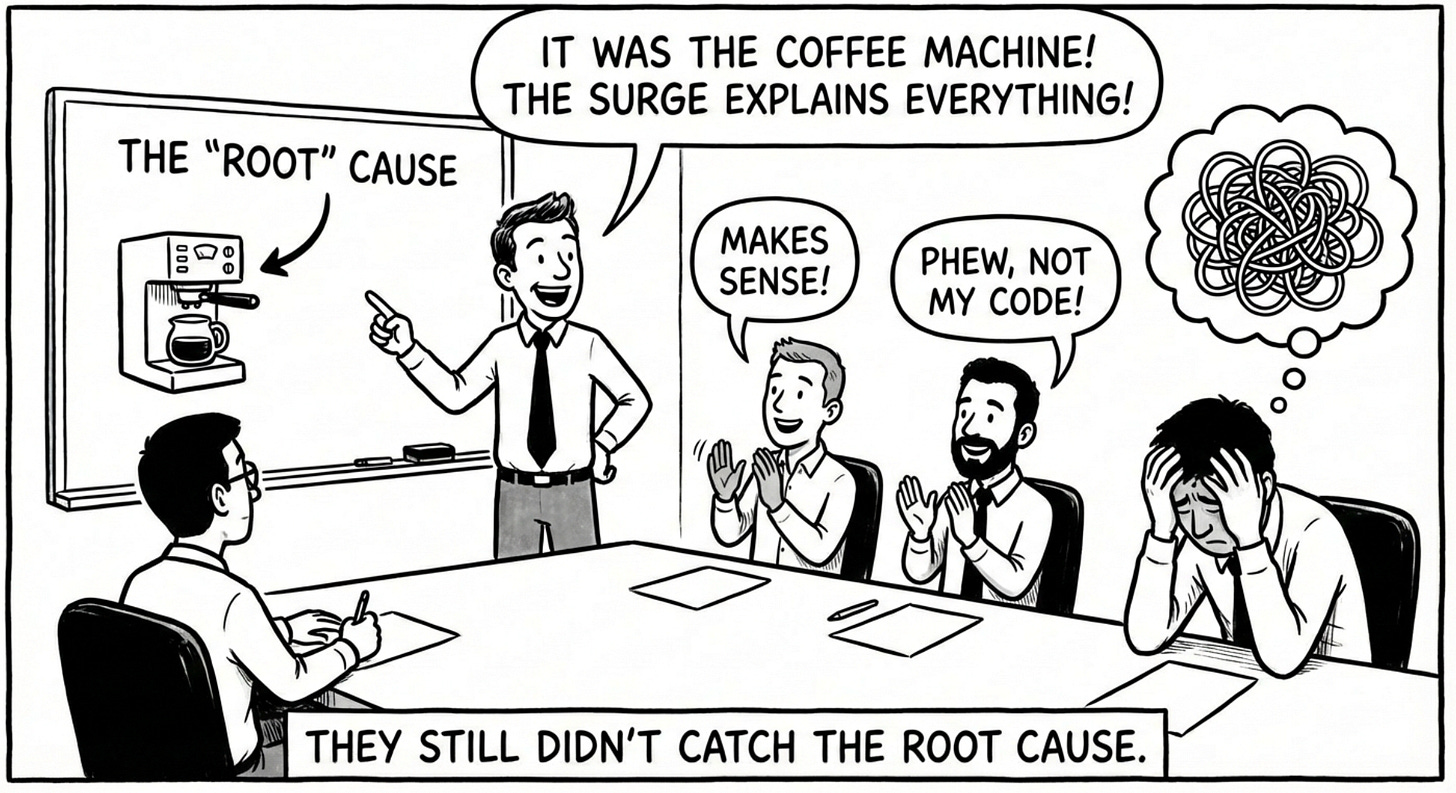

Every team like this eventually produces a well-intentioned deflector. Someone who, in the middle of an outage or a heated incident review, confidently declares: “This all happened because of X.” Maybe it’s a network blip. Maybe it’s a bad deploy. Maybe it’s “the cloud provider had an issue.” The explanation is plausible enough that nobody challenges it, specific enough that it sounds like a root cause, and shallow enough that it doesn’t threaten anyone’s assumptions. Leadership hears it, writes it down, moves on. The engineers are relieved, because now everyone is looking in the same direction and that direction isn’t at them.

I’ve seen this play out with noisy neighbors more times than I can count. Service is slow. Latency is spiking. Someone says “it’s noisy neighbors, we need isolated nodes.” It sounds right. It sounds like infrastructure wisdom. So the team escalates, gets dedicated capacity, and for a while things seem better, maybe because the deploy also happened to restart the pods, maybe because traffic patterns shifted. Then the latency comes back. So they ask for more isolation. More dedicated nodes. The bill goes up, the problem persists, and six weeks later someone finally profiles the application and finds a connection pool misconfiguration, or a missing index, or a goroutine leak that’s been there since day one. The noisy neighbor was never the problem. But it was a comfortable answer, and comfortable answers don’t get questioned.

The well-intentioned deflector isn’t lying. They might even be partially right. But “partially right” is the most dangerous kind of wrong in engineering, because it closes the investigation before it reaches the actual cause. And then, three months later, the same incident happens again. Maybe with a different trigger, maybe in a different service, but the same underlying pattern. Because the real root cause was never found. It was just too comfortable not to look.

The more I saw that pattern, across teams, across companies, across incidents, the more I came to believe that rigorous thinking isn’t just a pillar of engineering. It’s the pillar. Everything else, design, architecture, reliability, performance, is downstream of whether you’re building on verified reality or comfortable assumptions.

And right now, in the age of LLMs, it’s about to become the most valuable skill you can build.

The Interstellar Tax

Air navigation has a rule of thumb called the 1-in-60 rule: for every degree you’re off course, you drift one mile for every sixty you travel. At aviation distances, that’s a minor correction. At interplanetary distances, it’s annihilation.
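To make the arithmetic concrete, here is a minimal sketch that compares the rule of thumb with the exact trigonometry. The function names and the sample distances are illustrative assumptions, not figures from any mission.

```python
import math


def drift_rule_of_thumb(distance: float, error_deg: float) -> float:
    """1-in-60 approximation: one unit of drift per 60 units travelled, per degree."""
    return distance * error_deg / 60.0


def drift_exact(distance: float, error_deg: float) -> float:
    """Offset from the intended track after flying `distance` on a heading off by `error_deg`."""
    return distance * math.sin(math.radians(error_deg))


if __name__ == "__main__":
    # Same one-degree error, three very different journey lengths (units are arbitrary).
    for distance in (60.0, 600.0, 6_000_000.0):
        approx = drift_rule_of_thumb(distance, 1.0)
        exact = drift_exact(distance, 1.0)
        print(f"{distance:>12,.0f} travelled -> about {approx:,.1f} off course (exact {exact:,.1f})")
```

The error angle never changes in this loop. Only the distance does, and the drift scales with it linearly, which is the whole point of the rule.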

NASA’s Mars Climate Orbiter traveled 669 million kilometers over nine and a half months. One team at Lockheed Martin generated thrust data in pound-force-seconds. The navigation team at JPL assumed it was in newton-seconds. Nobody verified. Seven small errors accumulated across the journey. When the spacecraft arrived at Mars, it was 170 kilometers closer than planned - too close. It burned up. A $327 million mission, destroyed by a unit mismatch that went unchallenged for 286 days.

Every wrong assumption in engineering works like this. It’s not a static cost - it’s a trajectory error. The longer you travel on a false heading, the further you end up from reality. And by the time you discover you’re off course, the fuel to correct may already be spent.

I think about this compounding constantly. In the infrastructure world, I’ve seen teams spend months debugging network performance because everyone assumed the machines supplied by the cloud provider were fine. The assumption felt safe:

“it’s a managed component, it’s battle-tested, thousands of clusters run it.”

But assumptions don’t care about your feelings. That’s the interstellar tax. Every day spent traveling in the wrong direction is a day you have to travel back.

A Brief History of Expensive Assumptions

The wreckage of unchallenged assumptions is scattered across engineering history. The pattern is always the same: something was assumed, evidence was available, nobody checked.

Ariane 5 (1996) - Assumed Ariane 4 flight software value ranges would hold for a faster rocket. A 64-bit floating-point value overflowed when converted to a 16-bit signed integer. $370M rocket self-destructed 37 seconds after liftoff.

Boeing 737 MAX (2018-2019) - Assumed pilots would diagnose and override a faulty sensor-driven system within three seconds. 346 people dead. ~$20B in losses.

Hubble Space Telescope (1990) - Assumed the primary measuring device was calibrated correctly. Two backup instruments flagged the error. Readings dismissed. $1.5B mirror polished to exactly the wrong shape. $700M to fix.

Challenger (1986) - Assumed O-rings would hold at 31°F despite engineers recommending no launch below 53°F. Seven dead, 73 seconds after liftoff.

Therac-25 (1985-1987) - Assumed software alone could replace hardware safety interlocks. Manufacturer called overdose “impossible.” Six patients overdosed, three dead over 19 months.

Knight Capital (2012) - Assumed a deployment script reporting “success” meant all eight servers were updated. One wasn’t. Dormant code from 2005 activated. $440M lost in 45 minutes.

CrowdStrike (2024) - Assumed their content validator would catch a field count mismatch. Template defined 21 fields, sensor provided 20. 8.5 million Windows devices blue-screened simultaneously. ~$5.4B in losses.

AWS S3 (2017) - Assumed a routine capacity removal command had safe bounds and that restart procedures matched current scale. Took down ~20% of the internet for four hours. $150M+ in losses.

GitLab (2017) - Assumed their backups worked. Had five separate backup mechanisms. Zero functioned. Engineer ran rm -rf on production. 300GB of data lost. 18-hour outage.

Cloudflare (2019) - Assumed a regex passing CI was safe for global deployment. Catastrophic backtracking spiked every edge server to 100% CPU. 27 minutes of global outage.

Same pattern. Different decade, different domain. The assumption was reasonable. The evidence was available. Nobody verified.
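The Ariane 5 entry is the easiest of these to reproduce in a few lines. The sketch below is an illustration in Python, not the original Ada flight code, and every name in it is hypothetical. It contrasts a narrowing conversion that silently wraps with one that states its range assumption and fails loudly. As far as I know, the real conversion raised an unhandled exception rather than wrapping, so the unchecked variant shows the other common way the same assumption goes wrong.

```python
INT16_MIN, INT16_MAX = -32768, 32767


class RangeAssumptionViolated(ValueError):
    """Raised when a value does not fit the range the code was written for."""


def to_int16_unchecked(value: float) -> int:
    """Keep only the low 16 bits and reinterpret them as two's complement (silent wraparound)."""
    wrapped = int(value) & 0xFFFF
    return wrapped - 0x10000 if wrapped > INT16_MAX else wrapped


def to_int16_checked(value: float) -> int:
    """Convert only when the truncated value fits; otherwise name the broken assumption."""
    truncated = int(value)
    if not INT16_MIN <= truncated <= INT16_MAX:
        raise RangeAssumptionViolated(
            f"{value!r} does not fit in a signed 16-bit integer"
        )
    return truncated


if __name__ == "__main__":
    reading = 40000.0  # hypothetical sensor value outside the old flight envelope
    print("unchecked:", to_int16_unchecked(reading))
    try:
        to_int16_checked(reading)
    except RangeAssumptionViolated as exc:
        print("checked:", exc)
```

The checked version does not make the value fit. It only makes sure that when the assumption breaks, somebody finds out at the conversion site instead of downstream.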

Now Add LLMs to the Equation

Everything I’ve described above happened in a world where humans wrote their own code, did their own calculations, and made their own decisions about what to trust. The failure mode was always the same: humans not questioning their own assumptions. And even in that world, I watched people dodge hard questions in postmortems because the answers might be uncomfortable.

LLMs don’t create this problem. They accelerate it. And that’s exactly why the engineers who build real understanding right now will be more valuable than ever.

There’s a quieter version of this problem that nobody talks about. You make it up the ladder. You get the title, the scope, the org chart with your name near the top. And somewhere along the way, the systems you’re responsible for outgrew your hands-on understanding. You’re supposed to know, but you don’t. Not really. Not at the level where you could debug it yourself at 3 AM. So you develop instincts for when to nod confidently and when to delegate the question to someone who might actually know. This has always been part of senior engineering leadership, the gap between authority and understanding. But it used to be bounded by the fact that someone on the team had to write the code, had to understand it, had to make it work from scratch.

Now that gap can be closed with a prompt. And that changes what it means to be the person who actually understands.

With vibe coding and AI-assisted everything, the code actually works. That’s the interesting part. You prompt an LLM, you get a service that compiles, passes basic tests, handles the happy path. It looks like progress. It feels like velocity. But ask two questions and reality starts crumbling. Why Redis? Why can the server only handle this many requests? Why is this retry logic unbounded? The person who wrote it, who prompted it into existence, often can’t answer. Not because they’re bad engineers, but because they never had to confront those questions. The LLM absorbed them.

And here’s where it gets strange: the answers to those questions might come from the LLM itself. So now you have a human-bot interaction where the bot wrote the code and the bot explains the code, and the human in the middle is doing prompt engineering and interpreting the output. At some point you have to ask: what is the human actually contributing? If you’re not the one who understands why Redis, if you’re not the one who can reason about request capacity, you’re not engineering. You’re proxying. And you could remove the middleman entirely, just let the bot talk to itself, and the output would be roughly the same.

This is where the opportunity is. In a world where anyone can generate code, the person who can explain why that code is right or wrong becomes irreplaceable. The person who can ask the second question, the one the LLM can’t answer about your specific system, your specific failure modes, your specific constraints, that person is more valuable now than they’ve ever been. For the person who already felt the pressure to pretend they understood, LLMs are the perfect collaborator. They don’t judge. They don’t ask follow-ups. They give you something that looks right and let you move on. But for the person willing to actually understand, LLMs are the most powerful learning accelerator ever built. The difference is honest self-assessment about which one you’re doing.

More bugs. More confidence. That’s not a tooling problem. That’s an intellectual rigor problem.

The Assumptions Worth Questioning

LLMs introduce a new category of assumptions into your codebase. Not maliciously. Not even incorrectly, most of the time. But silently, and at scale. Knowing what to question is the skill.

Code that looks correct is not necessarily correct. LLMs produce code that reads like it was written by a competent human. It follows naming conventions, uses popular patterns, includes comments. This surface-level quality is genuinely impressive, and it’s also exactly what makes it worth scrutinizing. It passes the “does this look right?” filter, which was never a reliable filter to begin with. The engineer who reads it critically, instead of just visually, is the one who catches the issue before production does.

“It worked” does not mean “it’s right.” LLM-generated code often works for the common case. But engineering has always been about the cases you didn’t think of. The Ariane 5 code worked perfectly on Ariane 4 trajectories. The MCAS system worked perfectly until the sensor failed. The engineer who asks “what input would break this?” is doing the work that separates a demo from a production system.

Speed of production is not progress. When you can generate a thousand lines in minutes, it feels productive. But if you don’t understand those thousand lines, you’ve created technical debt at a speed that was never before possible. The engineer who slows down to understand what was generated, who treats the LLM output as a first draft rather than a finished product, is building something that will actually hold up.

The model does not understand your system. An LLM has no knowledge of your architecture, your invariants, your failure modes, your SLAs. It generates code based on statistical patterns from millions of other systems. It picked Redis because Redis appears frequently in training data next to the word “cache,” not because it analyzed your access patterns and consistency requirements. It set the connection pool to 10 because that’s a common default, not because it profiled your throughput under load. The question is never “can the LLM write this code?” The question is “does this code reflect the reality of my system?” The engineer who can answer that, who understands the system deeply enough to validate or reject what the model produced, is the one who will be indispensable. But if the human’s understanding also came from the LLM, you have a closed loop with no ground truth anywhere in the circuit.
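One way to replace a default like that pool size of 10 with a number tied to your own system is Little's law: the average number of requests in flight equals the arrival rate times the time each request holds a connection. The sketch below is a back-of-the-envelope estimate under assumed inputs. The function names, the traffic figures, and the headroom factor are all hypothetical, and real sizing still needs a load test.

```python
import math


def connections_needed(requests_per_second: float, hold_time_ms: float,
                       headroom: float = 1.5) -> int:
    """Estimate pool size from Little's law (L = arrival rate * time held), plus burst headroom."""
    in_flight = requests_per_second * hold_time_ms / 1000.0
    return max(1, math.ceil(in_flight * headroom))


def max_throughput(pool_size: int, hold_time_ms: float) -> float:
    """Upper bound on requests per second that a pool of this size can sustain."""
    return pool_size * 1000.0 / hold_time_ms


if __name__ == "__main__":
    # Assumed measurements: 400 requests/s, each holding a connection for 50 ms.
    print("pool size suggested:", connections_needed(400, 50))
    print("ceiling with a pool of 10:", max_throughput(10, 50), "requests/s")
```

The value of the exercise is less the formula than the inputs: you cannot fill in `requests_per_second` or `hold_time_ms` without measuring your own system.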

Honest Engineering as a Practice

I’ve come to see honest engineering not as a personality trait but as a set of concrete habits. Things you do before you trust, before you ship, before you declare something fixed.

Measure before theorizing. The Ariane 5 team assumed Ariane 4 ranges would hold. They never measured actual values for the new flight profile. In the LLM age, this means: don’t assume generated code handles your edge cases. Instrument it. Profile it. Feed it adversarial inputs.
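A minimal sketch of what feeding adversarial inputs can look like in practice. `mean_latency_ms` stands in for a generated helper and is hypothetical, as is the list of inputs. The idea is to record what the code actually does on awkward inputs instead of assuming it handles them.

```python
def mean_latency_ms(samples: list) -> float:
    """Stand-in for a generated helper: fine on the happy path, untested elsewhere."""
    return sum(samples) / len(samples)


ADVERSARIAL_INPUTS = [
    [],                   # no samples collected yet
    [float("nan")],       # a failed measurement recorded as NaN
    [-5.0],               # clock skew producing a negative duration
    [float("inf"), 1.0],  # a timeout recorded as infinity
]


def probe(func, inputs):
    """Run func on each input and record the outcome, whether it returns or raises."""
    findings = []
    for sample in inputs:
        try:
            findings.append((sample, "returned", func(sample)))
        except Exception as exc:  # broad on purpose: every failure is a finding
            findings.append((sample, "raised", type(exc).__name__))
    return findings


if __name__ == "__main__":
    for sample, outcome, detail in probe(mean_latency_ms, ADVERSARIAL_INPUTS):
        print(f"{sample!r:>16} -> {outcome}: {detail}")
```

Each finding then becomes a decision: is raising on an empty list acceptable, or is a quietly returned NaN the worse result? The harness does not answer that. It only makes sure the question gets asked.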

Treat contradicting data as signal, not noise. Hubble’s backup instruments flagged the mirror flaw. The readings were dismissed. The Therac-25 operators reported burns; the manufacturer said it was impossible. When your monitoring says something different from your mental model, your mental model is wrong. This applies equally to AI-generated code that “should work” but behaves unexpectedly in staging.

Verify across boundaries. The Mars Climate Orbiter failed at the interface between Lockheed Martin and JPL, each correct in isolation, wrong together. LLMs have no concept of your system boundaries, your team interfaces, your deployment constraints. Every piece of generated code that crosses a boundary, between services, between teams, between trust zones, needs manual verification.
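A sketch of one way to make a unit mismatch fail at the boundary rather than months later: refuse any value that does not declare its unit, and convert to a single internal unit on the way in. The record format and every name here are hypothetical. Only the conversion factor is a fixed fact (one pound-force is defined as 4.4482216152605 newtons).

```python
from dataclasses import dataclass

# Exact by definition: 0.45359237 kg * 9.80665 m/s^2.
NEWTON_SECONDS_PER_LBF_SECOND = 4.4482216152605


@dataclass(frozen=True)
class Impulse:
    """An impulse stored in exactly one internal unit: newton-seconds."""
    newton_seconds: float

    @classmethod
    def from_newton_seconds(cls, value: float) -> "Impulse":
        return cls(value)

    @classmethod
    def from_pound_force_seconds(cls, value: float) -> "Impulse":
        return cls(value * NEWTON_SECONDS_PER_LBF_SECOND)


def parse_impulse(record: dict) -> Impulse:
    """Boundary check: reject any record that does not state a known unit."""
    unit = record.get("unit")
    value = float(record["value"])
    if unit == "N*s":
        return Impulse.from_newton_seconds(value)
    if unit == "lbf*s":
        return Impulse.from_pound_force_seconds(value)
    raise ValueError(f"impulse record has a missing or unknown unit: {unit!r}")


if __name__ == "__main__":
    print(parse_impulse({"value": 1.0, "unit": "lbf*s"}))
    try:
        parse_impulse({"value": 1.0})  # a bare number, unit left to assumption
    except ValueError as exc:
        print("rejected:", exc)
```

A bare float crossing a team boundary carries an assumption with it. A value that cannot be constructed without naming its unit carries the evidence instead.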

Understand before you merge. If you can’t explain why the code works, you can’t explain why it won’t work. The 59% of developers shipping code they don’t understand are creating future incidents that nobody will be able to debug, because nobody understood the system they built. This was always true for copy-pasted Stack Overflow answers, but LLMs have industrialized the problem.

Make assumptions explicit. The Ariane 5 overflow decision was “obscured from external review.” If your LLM prompt assumes a specific data format, a particular library version, a certain deployment environment, write that down. Review it. Challenge it. Because the model won’t.
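Writing an assumption down can be as literal as turning it into a check that runs. The sketch below assumes a hypothetical order record and a hypothetical minimum interpreter version. The specific fields and limits are placeholders for whatever your prompt silently took for granted.

```python
import sys
from datetime import datetime

# Assumption: the generated code relies on features of this Python version or newer.
MIN_PYTHON = (3, 9)

# Assumption: every record has these fields with these types (hypothetical schema).
REQUIRED_FIELDS = {"order_id": str, "amount_cents": int, "created_at": str}


def check_runtime_assumptions() -> None:
    """Fail at startup, not mid-request, if the environment differs from what was assumed."""
    if sys.version_info < MIN_PYTHON:
        raise RuntimeError(f"requires Python {MIN_PYTHON}, found {sys.version_info[:2]}")


def check_record_assumptions(record: dict) -> None:
    """Reject records that do not match the format the code was written against."""
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in record:
            raise ValueError(f"missing field {field!r}")
        if not isinstance(record[field], expected_type):
            raise ValueError(f"{field!r} should be {expected_type.__name__}")
    # Assumption: timestamps are ISO 8601 and carry an explicit UTC offset.
    created = datetime.fromisoformat(record["created_at"])
    if created.tzinfo is None:
        raise ValueError("created_at must carry a UTC offset")


if __name__ == "__main__":
    check_runtime_assumptions()
    check_record_assumptions(
        {"order_id": "A-1", "amount_cents": 1250, "created_at": "2024-07-19T04:09:00+00:00"}
    )
    print("assumptions hold for this record")
```

The checks themselves are unremarkable. What matters is that each one is now a line someone can read in review and disagree with.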

The Skill That Will Matter Most

LLMs are not going away. They’re going to get better. The code they generate will improve, the vulnerabilities will decrease, the tooling around them will mature. But none of that changes the fundamental dynamic: someone still needs to understand the system.

When you write code yourself, you encounter resistance. You hit compiler errors, test failures, logical contradictions. Each one is a micro-moment of reality testing, a forced confrontation with how things actually work. When an LLM generates code for you, those friction points disappear. The code compiles. The tests pass. Everything looks green. And the absence of friction feels like correctness.

But the absence of friction isn’t the absence of bugs. It’s the absence of your understanding of where the bugs are.

History teaches us that the most expensive engineering failures don’t come from ignorance. They come from false confidence, from teams that believed they knew enough, that the system was well-understood, that the assumption was safe. Every disaster I’ve described was built by brilliant engineers who were wrong about one thing and didn’t know it.

So here’s my advice, especially if you’re early in your career: build the understanding. Use LLMs, use them aggressively, but use them the way you’d use a senior engineer who’s brilliant but has never seen your codebase. They can draft, they can suggest, they can teach you patterns. But they can’t tell you whether those patterns fit your system. That part is yours. And the more people outsource that part, the more valuable it becomes for the people who don’t.

The engineers who thrive in this era won’t be the ones who can prompt the fastest. They’ll be the ones who can look at what the model produced and say, with confidence, “this is wrong, and here’s why.” Or, just as importantly, “I don’t know if this is right, and I need to find out before we ship it.”

That’s intellectual courage. It has always been the foundation of good engineering. In the age of vibes, it’s the whole building.

If there's one skill I'd want every engineer to build, one thing I'd want the next computer science curriculum to actually teach, it would be this: intellectual honesty. How to question what's in front of you, especially when it looks right. We're going to vibe. We're going to generate, ship, and iterate faster than ever. But the engineers who matter will be the ones who pause, question, and then vibe again, better.

References

Mars Climate Orbiter (1999) - $327M loss due to metric/imperial unit mismatch between Lockheed Martin and NASA JPL.

Ariane 5 Flight 501 (1996) - $370M loss, 37 seconds after liftoff. Integer overflow in reused Ariane 4 software.

Boeing 737 MAX / MCAS (2018–2019) - 346 deaths across Lion Air Flight 610 and Ethiopian Airlines Flight 302. Single-sensor design, flawed safety assumptions.

Hubble Space Telescope Mirror Flaw (1990) - $1.5B telescope launched with mirror ground to the wrong curvature. $700M servicing mission to correct.

Space Shuttle Challenger (1986) - 7 crew killed. O-ring failure in cold temperatures despite engineer warnings.

Therac-25 (1985–1987) - At least 6 radiation overdoses, 3 deaths. Software race conditions with no hardware safety interlocks.

Knight Capital Group (2012) - $440M loss. Deployment error triggered dormant trading code, bankrupting the firm in 45 minutes.

CrowdStrike Channel File 291 Outage (2024) - A faulty sensor configuration update crashed 8.5 million Windows devices worldwide.

AWS S3 Outage (2017) - A mistyped command removed critical infrastructure and took down ~20% of the internet for 4 hours.

Gremlin: After the Retrospective - The 2017 Amazon S3 Outage

Network World: AWS Says a Typo Caused the Massive S3 Failure

GitLab Database Outage (2017) - An engineer accidentally deleted 300GB of production data; all five backup methods had silently failed.

Cloudflare WAF Outage (2019) - A single regex with catastrophic backtracking behavior spiked every edge server to 100% CPU globally.

LLM Code Security Data (2025)